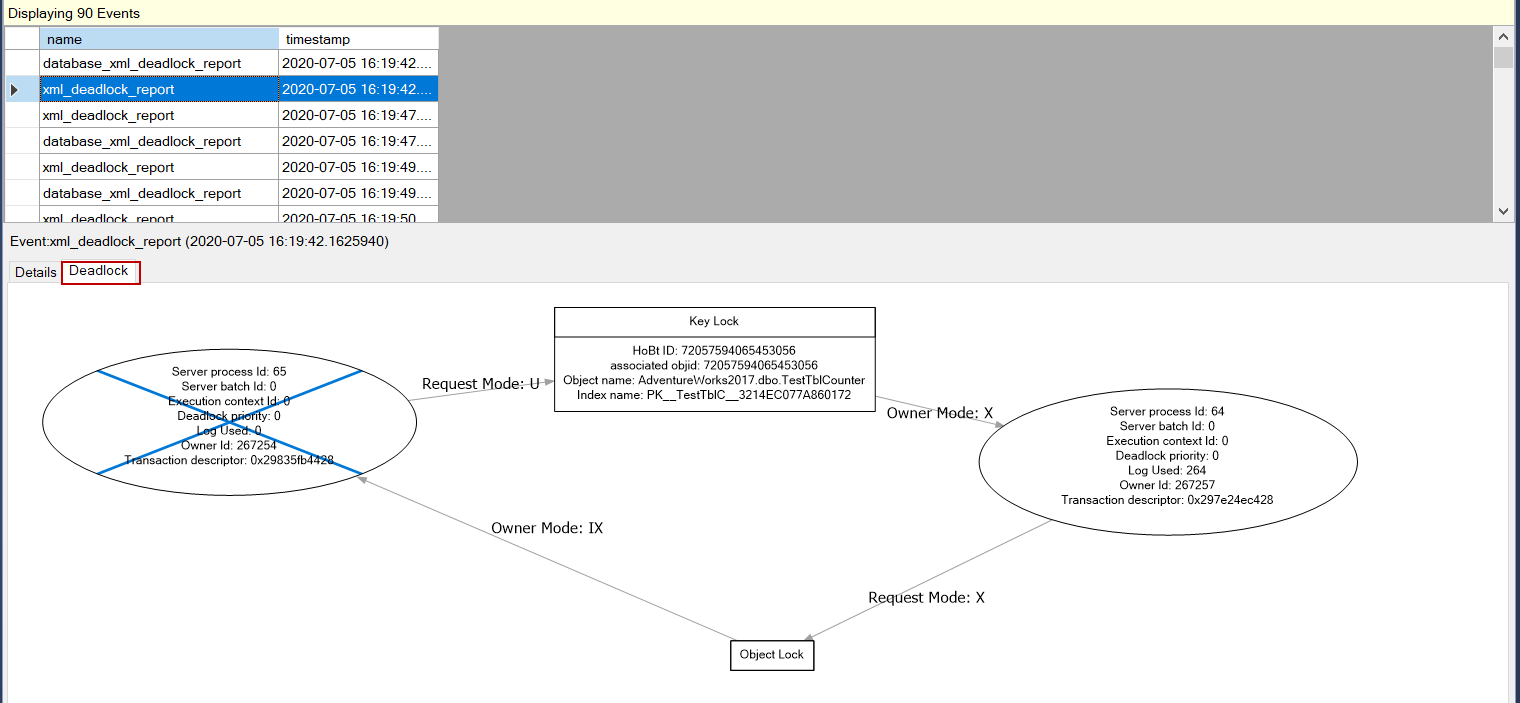

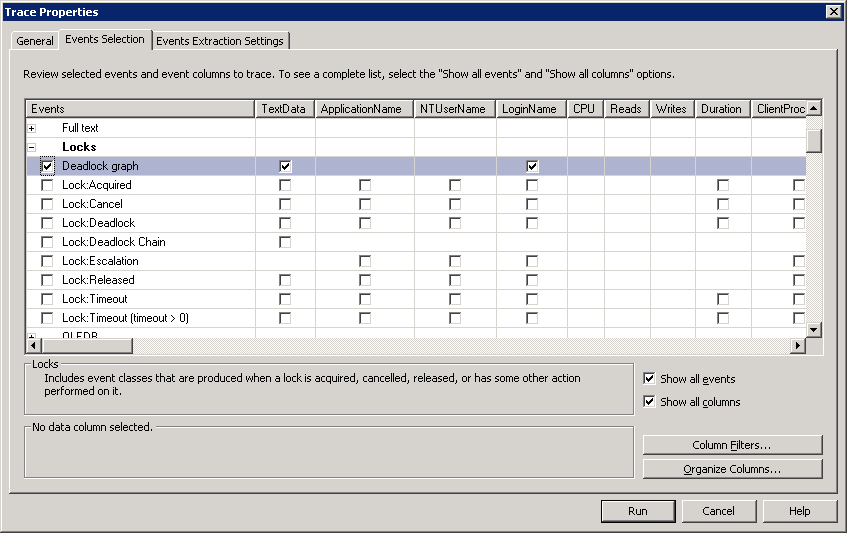

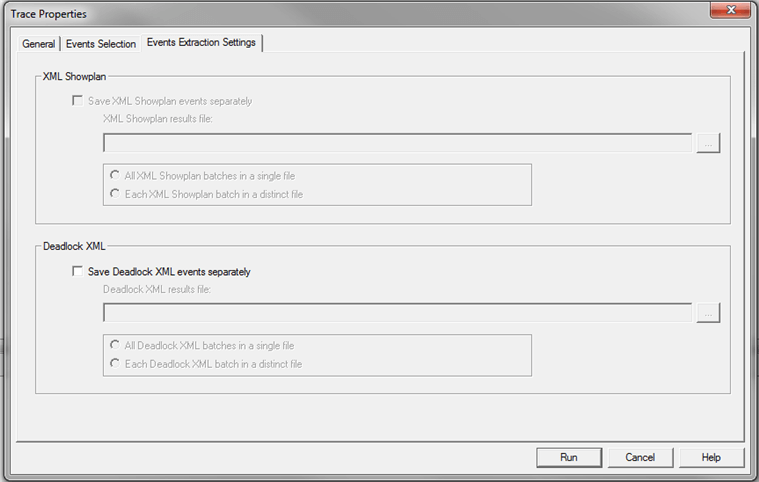

After the last index blog post, here is another technical issue that I recently thought I had solved. As you know, I dropped all indexes in my data warehouse, and with them the unique constraints on my tables, at least for the next year or so until I find another solution. The application should make sure that everything is fine, but I have a regular report that checks whether the constraints are still intact. Lately, while working on a project with a huge amount of data, I occasionally got hit with duplicate rows in my hubs and links, although I had used all the measures below. That was the reason to investigate further why it happened. Maybe unique constraint indexes do additional work when data is loaded into them. I must admit, I'm not the expert in that area. Depending on the number of queries running at the same time with SELECT, UPDATE and DELETE, SQL Server decides which data is locked, read, waited for, overwritten or inserted. Therefore, this is a little summary of my lessons learnt.

Under optimistic locking, records are locked only when they are updated, which allows other users to make changes to the database in the meantime. This introduces the possibility that two transactions modify the same data at the same time. Although this is not the default locking method used by MS SQL Server 2012, it can easily be implemented by using cursors, because a cursor allows you to control an individual record in a recordset. Cursors are covered in a prior module in this course. The next lesson introduces you to issues relating to locking, called deadlocks.
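The regular report that checks whether uniqueness still holds after the unique constraints were dropped could be built around a simple grouping query. The sketch below is an assumption, not the actual report: it uses Python's built-in `sqlite3` module in place of SQL Server so that it runs on its own, and the table `hub_customer`, the column `customer_bk` and the helper `find_duplicate_keys` are hypothetical names.

```python
import sqlite3


def find_duplicate_keys(conn, table, key_column):
    """Return (key, count) pairs for keys that occur more than once."""
    # Identifiers cannot be bound as parameters, so they are quoted inline;
    # only pass trusted table and column names.
    query = (
        f'SELECT "{key_column}", COUNT(*) AS n '
        f'FROM "{table}" '
        f'GROUP BY "{key_column}" '
        f'HAVING COUNT(*) > 1 '
        f'ORDER BY "{key_column}"'
    )
    return conn.execute(query).fetchall()


# Hypothetical hub table without a unique constraint on the business key.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE hub_customer (customer_bk TEXT, load_date TEXT)")
conn.executemany(
    "INSERT INTO hub_customer VALUES (?, ?)",
    [("C1", "2020-01-01"), ("C2", "2020-01-01"), ("C1", "2020-01-02")],
)

duplicates = find_duplicate_keys(conn, "hub_customer", "customer_bk")
print(duplicates)  # [('C1', 2)]
```

The same `GROUP BY ... HAVING COUNT(*) > 1` pattern should carry over to T-SQL; an empty result means the business key is still unique.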

Even though SQL Server handles locking automatically for you, do not think that you are going to get off that easily. You must decide between two locking strategies to implement in your database, as follows:

Pessimistic locking: Records are locked when they are read within a transaction, preventing any user from making any changes before the transaction is completed. This ensures that updates succeed, unless a deadlock occurs. This is the default locking method used by MS SQL Server 2000.

Optimistic locking: A method whereby records are NOT locked when they are read within a transaction.

Pessimistic record locking is used when it is expected that conflicts between concurrent transactions will be frequent. This approach prevents multiple transactions from reading or updating a record simultaneously by placing a lock on the record as soon as it is accessed. Other transactions that attempt to access the locked record will be blocked until the lock is released. This can help to prevent conflicts, but it can also lead to blocking and deadlocks if not used carefully.

The optimistic approach allows multiple transactions to read and update a record simultaneously, but it requires each transaction to check for conflicts before committing its changes. If a conflict is detected, the transaction must be rolled back and retried.

In general, optimistic locking is preferred in situations where contention is expected to be low, and pessimistic locking is preferred in situations where contention is expected to be high.
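The check-then-retry cycle of optimistic locking can be sketched with a version column. This is a minimal illustration under stated assumptions, not how SQL Server implements it: it uses Python's built-in `sqlite3` module, the table `account`, its `version` column, the function `update_balance` and the `before_write` hook are all hypothetical, and a single connection stands in for two concurrent writers.

```python
import sqlite3


def update_balance(conn, account_id, delta, max_retries=3, before_write=None):
    """Optimistic update: read without locking, write only if the version is unchanged."""
    for _ in range(max_retries):
        balance, version = conn.execute(
            "SELECT balance, version FROM account WHERE id = ?", (account_id,)
        ).fetchone()
        if before_write is not None:
            before_write()  # demo hook: lets a competing writer act between read and write
        cur = conn.execute(
            "UPDATE account SET balance = ?, version = version + 1 "
            "WHERE id = ? AND version = ?",
            (balance + delta, account_id, version),
        )
        if cur.rowcount == 1:
            conn.commit()  # version matched: no conflict
            return True
        conn.rollback()  # conflict detected: roll back and retry with fresh data
    return False


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE account (id INTEGER PRIMARY KEY, balance INTEGER, version INTEGER)"
)
conn.execute("INSERT INTO account VALUES (1, 100, 0)")
conn.commit()

calls = []


def competing_writer():
    # Simulates another transaction that commits a change, on the first attempt only.
    if not calls:
        conn.execute(
            "UPDATE account SET balance = balance + 50, version = version + 1 WHERE id = 1"
        )
        conn.commit()
    calls.append(1)


ok = update_balance(conn, 1, -30, before_write=competing_writer)
final = conn.execute("SELECT balance, version FROM account WHERE id = 1").fetchone()
print(ok, final)  # True (120, 2)
```

The first attempt fails because the version changed between the read and the write; the retry reads the new balance of 150 and succeeds, so neither update is lost.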

Optimistic versus pessimistic record locking in SQL Server

Optimistic record locking is used when it is expected that conflicts between concurrent transactions will be rare.

SQL Server's lock modes include the following. (Deadlocking will be discussed in a later lesson in this module.)

Exclusive: used in data modification operations, such as INSERT, UPDATE, and DELETE Transact-SQL statements. This mode makes sure that two transactions cannot modify the same data at the same time. No other lock will be granted by SQL Server if there is an exclusive lock present.

Intent: establishes a locking hierarchy by acting as a queue for transactions that have the intention of achieving an exclusive lock.

Schema: used when the schema of a table changes.

Bulk Update: used when bulk copying data with the BCP program. (Bulk copy is discussed in another course in this series.)
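The effect of an exclusive lock, where no other access is granted while it is held, can be observed in a small experiment. The sketch below is only an analogy and rests on several assumptions: it uses SQLite through Python's built-in `sqlite3` module, where `BEGIN EXCLUSIVE` locks the whole database file (in the default rollback-journal mode) rather than individual rows as SQL Server can, and the table `hub` and the connection names are hypothetical.

```python
import os
import sqlite3
import tempfile

# Two connections need a shared database file; an in-memory database is private.
path = os.path.join(tempfile.mkdtemp(), "locks_demo.db")

# isolation_level=None: transactions are controlled explicitly with BEGIN/COMMIT.
# timeout=0: fail immediately instead of waiting for a lock to be released.
writer = sqlite3.connect(path, isolation_level=None, timeout=0)
other = sqlite3.connect(path, isolation_level=None, timeout=0)

writer.execute("CREATE TABLE hub (bk TEXT)")
writer.execute("INSERT INTO hub VALUES ('A')")

# The writer takes an exclusive lock and modifies data inside the transaction.
writer.execute("BEGIN EXCLUSIVE")
writer.execute("UPDATE hub SET bk = 'B'")

try:
    other.execute("SELECT bk FROM hub").fetchall()
    blocked = False
except sqlite3.OperationalError:
    # SQLite reports "database is locked" while the exclusive lock is held.
    blocked = True

writer.execute("COMMIT")  # releases the lock

rows_after = other.execute("SELECT bk FROM hub").fetchall()
print(blocked, rows_after)
```

With `timeout=0` the second connection fails at once; with a longer timeout it would wait for the lock to be released, which is closer to the blocking behavior described for SQL Server.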
